Installing and Using Flume

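# Installation

# Prerequisites

- Java 1.8 or later

- Sufficient memory: in most setups the channels buffer events in memory

- Sufficient disk space

- Read/write permissions on the directories the agent uses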
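The prerequisites above can be checked from a terminal before installing. The following is a minimal sketch, not an official check: `java_major` is a hypothetical helper introduced here for illustration, and the script assumes that `java -version` prints a quoted version string such as "1.8.0_131".

```shell
# Hypothetical helper: extract the major version from strings like
# "1.8.0_131" (legacy numbering) or "11.0.2" (modern numbering).
java_major() {
  v=$1
  case "$v" in
    1.*) v=${v#1.}; echo "${v%%.*}" ;;
    *)   echo "${v%%[.-]*}" ;;
  esac
}

if command -v java >/dev/null 2>&1; then
  # java -version writes to stderr, so redirect it before parsing.
  ver=$(java -version 2>&1 | sed -n 's/.*version "\([^"]*\)".*/\1/p' | head -n 1)
  if [ -n "$ver" ] && [ "$(java_major "$ver")" -ge 8 ]; then
    echo "Java $ver meets the 1.8+ requirement"
  else
    echo "Java 1.8 or later is required, found: $ver"
  fi
else
  echo "java was not found on PATH"
fi

# Show free disk space for the current directory.
df -h .
```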

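Installing Flume is simple: download the archive, extract it, and it is ready to use. Download URL: http://www-us.apache.org/dist/flume/1.7.0/apache-flume-1.7.0-bin.tar.gz

This article uses version 1.7.0. After downloading, extract Flume to a directory of your choice; here I extracted it to /usr/local/hadoop/flume-1.7.0. To make the commands easier to run later, I added Flume to the environment variables by appending the following to /etc/profile: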

export FLUME_HOME=/usr/local/hadoop/flume-1.7.0

export PATH=$PATH:$FLUME_HOME/bin

Then run source /etc/profile so that the change takes effect immediately.
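To confirm that the environment change took effect, a quick check along these lines could be used. It is a sketch that repeats the two export lines from this article so it can run on its own, and it relies on the `flume-ng version` subcommand to print the installed version when the launcher is found.

```shell
export FLUME_HOME=/usr/local/hadoop/flume-1.7.0
export PATH=$PATH:$FLUME_HOME/bin

# Report whether $FLUME_HOME/bin is one of the PATH entries.
case ":$PATH:" in
  *":$FLUME_HOME/bin:"*) echo "Flume bin directory is on PATH" ;;
  *) echo "Flume bin directory is missing from PATH" ;;
esac

# Print the Flume version if the launcher script can be found.
if command -v flume-ng >/dev/null 2>&1; then
  flume-ng version
else
  echo "flume-ng not found; check the extraction directory"
fi
```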

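# Using Flume

Assuming you already understand Flume's principles and architecture, you know that using Flume starts with configuring an agent. This walkthrough follows a simple example from the official user guide: http://flume.apache.org/FlumeUserGuide.html

# Configuring the agent

The agent configuration is as follows: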

# example.conf: A single-node Flume configuration

# Name the components on this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

a1.sources.r1.type = netcat

a1.sources.r1.bind = localhost

a1.sources.r1.port = 44444

# Describe the sink

a1.sinks.k1.type = logger

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

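Notes on the configuration. The file declares one source, one sink, and one channel for agent a1:

- source: r1 is a netcat source. It listens on port 44444 of localhost and turns each line of text it receives into an event.

- sink: k1 is a logger sink. It writes events to the log, which here means the console of the terminal where the agent is started.

- channel: c1 is a memory channel. It buffers events in memory, holding at most 1000 events, with at most 100 events per transaction.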
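The wiring described above can be sanity-checked with a few lines of shell. This is only a sketch: `prop` is a hypothetical helper, the configuration is copied into a temporary file so the script is self-contained, and the check assumes a single channel name in a1.channels, as in this example.

```shell
conf=$(mktemp)
cat > "$conf" <<'EOF'
a1.sources = r1
a1.sinks = k1
a1.channels = c1
a1.sources.r1.type = netcat
a1.sources.r1.bind = localhost
a1.sources.r1.port = 44444
a1.sinks.k1.type = logger
a1.channels.c1.type = memory
a1.channels.c1.capacity = 1000
a1.channels.c1.transactionCapacity = 100
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
EOF

# Hypothetical helper: print the value of an exact property key.
prop() {
  awk -F' *= *' -v k="$1" '$1 == k { print $2 }' "$conf"
}

declared=$(prop a1.channels)
src_channel=$(prop a1.sources.r1.channels)
sink_channel=$(prop a1.sinks.k1.channel)
capacity=$(prop a1.channels.c1.capacity)
tx_capacity=$(prop a1.channels.c1.transactionCapacity)

# The source and the sink must both point at the declared channel.
if [ "$src_channel" = "$declared" ] && [ "$sink_channel" = "$declared" ]; then
  echo "source r1 and sink k1 both use channel $declared"
else
  echo "channel wiring mismatch" >&2
fi

# A transaction cannot hold more events than the channel itself.
if [ "$tx_capacity" -le "$capacity" ]; then
  echo "transactionCapacity $tx_capacity fits within capacity $capacity"
fi

rm -f "$conf"
```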

# Starting the agent

The official example gives the start command in this form:

$ bin/flume-ng agent -n $agent_name -c conf -f conf/flume-conf.properties.template

You need to pass the name of the agent to start and the path to its configuration file, which gives the following final command:

$ flume-ng agent --conf conf --conf-file /usr/local/hadoop/flume-1.7.0/conf-example/netcat-console.conf --name a1 -Dflume.root.logger=INFO,console

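The options are:

- --conf: the directory that holds Flume's own configuration files, such as flume-env.sh and log4j.properties

- --conf-file: the agent configuration file, here **/usr/local/hadoop/flume-1.7.0/conf-example/netcat-console.conf**; a path that is not absolute is resolved relative to the current directory

- --name: the name of the agent to start, which must match the agent name used in the configuration file (a1)

- -Dflume.root.logger=INFO,console: sends Flume's log output to the console at INFO level

Running the command produces the following output: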

[root@hadoop1 conf-example]# flume-ng agent --conf conf --conf-file /usr/local/hadoop/flume-1.7.0/conf-example/netcat-console.conf --name a1 -Dflume.root.logger=INFO,console

Info: Including Hadoop libraries found via (/usr/local/hadoop/hadoop-2.7.4/bin/hadoop) for HDFS access

Info: Including Hive libraries found via () for Hive access

+ exec /usr/java/jdk1.8.0_131/bin/java -Xmx20m -Dflume.root.logger=INFO,console -cp 'conf:/usr/local/hadoop/flume-1.7.0/lib/*:/usr/local/hadoop/hadoop-2.7.4/etc/hadoop:/usr/local/hadoop/hadoop-2.7.4/share/hadoop/common/lib/*:/usr/local/hadoop/hadoop-2.7.4/share/hadoop/common/*:/usr/local/hadoop/hadoop-2.7.4/share/hadoop/hdfs:/usr/local/hadoop/hadoop-2.7.4/share/hadoop/hdfs/lib/*:/usr/local/hadoop/hadoop-2.7.4/share/hadoop/hdfs/*:/usr/local/hadoop/hadoop-2.7.4/share/hadoop/yarn/lib/*:/usr/local/hadoop/hadoop-2.7.4/share/hadoop/yarn/*:/usr/local/hadoop/hadoop-2.7.4/share/hadoop/mapreduce/lib/*:/usr/local/hadoop/hadoop-2.7.4/share/hadoop/mapreduce/*:/usr/local/hadoop/hadoop-2.7.4/contrib/capacity-scheduler/*.jar:/lib/*' -Djava.library.path=:/usr/local/hadoop/hadoop-2.7.4/lib org.apache.flume.node.Application --conf-file /usr/local/hadoop/flume-1.7.0/conf-example/netcat-console.conf --name a1

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/usr/local/hadoop/flume-1.7.0/lib/slf4j-log4j12-1.6.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/usr/local/hadoop/hadoop-2.7.4/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

17/10/18 23:35:32 INFO node.PollingPropertiesFileConfigurationProvider: Configuration provider starting

17/10/18 23:35:32 INFO node.PollingPropertiesFileConfigurationProvider: Reloading configuration file:/usr/local/hadoop/flume-1.7.0/conf-example/netcat-console.conf

17/10/18 23:35:32 INFO conf.FlumeConfiguration: Added sinks: k1 Agent: a1

17/10/18 23:35:32 INFO conf.FlumeConfiguration: Processing:k1

17/10/18 23:35:32 INFO conf.FlumeConfiguration: Processing:k1

17/10/18 23:35:32 INFO conf.FlumeConfiguration: Post-validation flume configuration contains configuration for agents: [a1]

17/10/18 23:35:32 INFO node.AbstractConfigurationProvider: Creating channels

17/10/18 23:35:32 INFO channel.DefaultChannelFactory: Creating instance of channel c1 type memory

17/10/18 23:35:32 INFO node.AbstractConfigurationProvider: Created channel c1

17/10/18 23:35:32 INFO source.DefaultSourceFactory: Creating instance of source r1, type netcat

17/10/18 23:35:32 INFO sink.DefaultSinkFactory: Creating instance of sink: k1, type: logger

17/10/18 23:35:32 INFO node.AbstractConfigurationProvider: Channel c1 connected to [r1, k1]

17/10/18 23:35:32 INFO node.Application: Starting new configuration:{ sourceRunners:{r1=EventDrivenSourceRunner: { source:org.apache.flume.source.NetcatSource{name:r1,state:IDLE} }} sinkRunners:{k1=SinkRunner: { policy:org.apache.flume.sink.DefaultSinkProcessor@1b4b27c counterGroup:{ name:null counters:{} } }} channels:{c1=org.apache.flume.channel.MemoryChannel{name: c1}} }

17/10/18 23:35:32 INFO node.Application: Starting Channel c1

17/10/18 23:35:32 INFO instrumentation.MonitoredCounterGroup: Monitored counter group for type: CHANNEL, name: c1: Successfully registered new MBean.

17/10/18 23:35:32 INFO instrumentation.MonitoredCounterGroup: Component type: CHANNEL, name: c1 started

17/10/18 23:35:32 INFO node.Application: Starting Sink k1

17/10/18 23:35:32 INFO node.Application: Starting Source r1

17/10/18 23:35:32 INFO source.NetcatSource: Source starting

17/10/18 23:35:32 INFO source.NetcatSource: Created serverSocket:sun.nio.ch.ServerSocketChannelImpl[/127.0.0.1:44444]

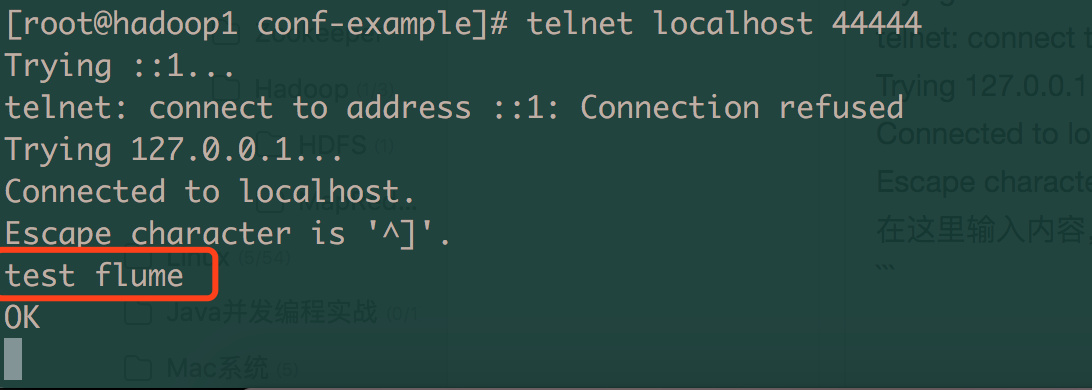
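The launch command above can be wrapped in a small function so the agent name and configuration path are set in one place. This is a sketch under assumptions: `start_agent` is a hypothetical name, and the function passes an absolute --conf directory ($FLUME_HOME/conf) instead of the relative conf used above, so that it behaves the same from any working directory.

```shell
FLUME_HOME=${FLUME_HOME:-/usr/local/hadoop/flume-1.7.0}
AGENT_NAME=a1
CONF_FILE=$FLUME_HOME/conf-example/netcat-console.conf

# Hypothetical wrapper around the flume-ng command shown in this article.
start_agent() {
  # Fail early with a clear message if the agent config is missing.
  if [ ! -f "$CONF_FILE" ]; then
    echo "config file not found: $CONF_FILE" >&2
    return 1
  fi
  flume-ng agent \
    --conf "$FLUME_HOME/conf" \
    --conf-file "$CONF_FILE" \
    --name "$AGENT_NAME" \
    -Dflume.root.logger=INFO,console
}
```

Calling start_agent in a terminal then starts the agent in the foreground, and Ctrl+C stops it.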

Open a new terminal window and connect to port 44444 on localhost. This requires the telnet tool; if it is not installed, you can install it with yum install telnet.

[root@hadoop1 conf-example]# telnet localhost 44444

Trying ::1...

telnet: connect to address ::1: Connection refused

Trying 127.0.0.1...

Connected to localhost.

Escape character is '^]'.

Type some text here and press Enter.

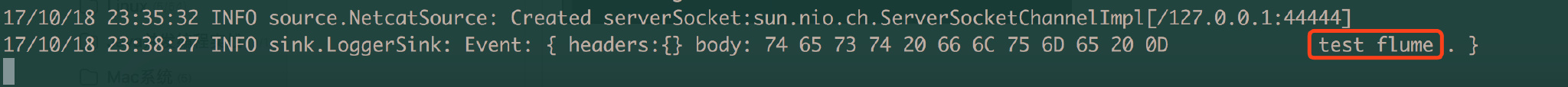
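If telnet is not available, a single line can also be sent with nc. The sketch below assumes an nc variant that accepts the -w timeout option; `send_event`, `FLUME_HOST`, and `FLUME_PORT` are hypothetical names introduced for this example. It connects to 127.0.0.1 directly because the agent log above shows the source bound to that address.

```shell
FLUME_HOST=${FLUME_HOST:-127.0.0.1}
FLUME_PORT=${FLUME_PORT:-44444}

# Hypothetical helper: send one line of text to the netcat source.
# -w 2 gives up after 2 seconds of inactivity instead of hanging.
send_event() {
  printf '%s\n' "$1" | nc -w 2 "$FLUME_HOST" "$FLUME_PORT"
}
```

With the agent running, a call such as send_event "hello flume" sends one line, which the netcat source turns into one event.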

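# Verifying the result

Compare what you typed in the telnet window with the output in the terminal where the agent was started. Each line you sent should appear there as an event written by the logger sink.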